An AI That Predicts a Neighborhood’s Wealth From Space

Wired Magazine

06.28.2017

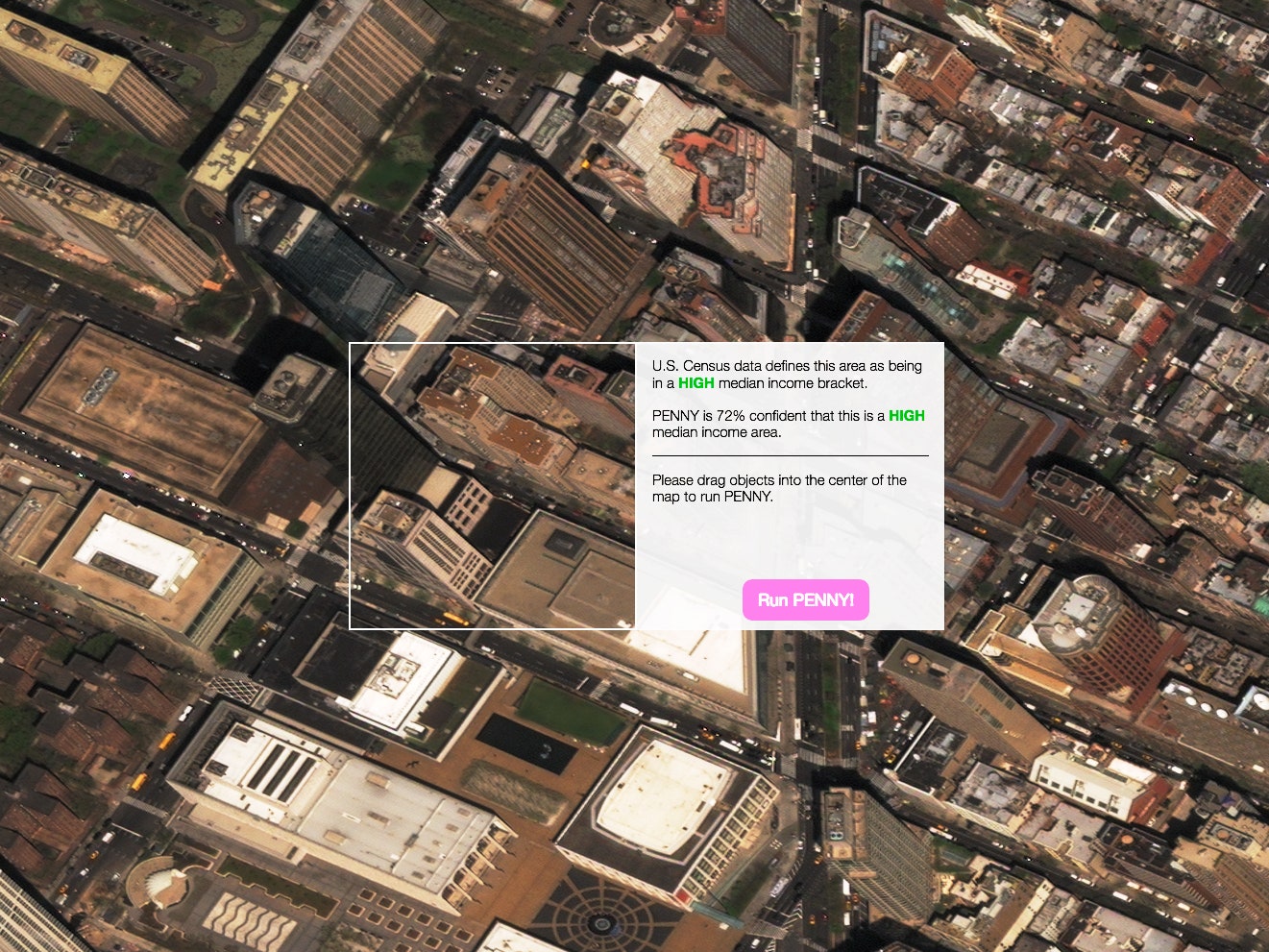

Penny provides a glimpse at how AI and machine learning make sense of a city. “It’s not for deciding whether to put a hedgerow in your yard, it’s to help us understand how machines make sense of our world,” says Jordan Winkler, the product manager for DigitalGlobe, the company that provided the imagery Penny uses. Mostly, he says, Penny is about getting people to think about how AI and machine learning actually work, or don’t.

Aman Tiwari, a computer scientist at Carnegie Mellon University, trained the AI by overlaying census data on high-resolution satellite imagery of New York and feeding it through a neural network. (He did the same thing with census data and satellite imagery of St. Louis, but each model can only predict household incomes in its respective city.) The AI started to associate visual patterns in the urban landscape with income, and different objects and shapes seemed to be highly correlated with different income levels—parking lots with low income, green spaces with high income, that sort of thing. Tiwari worked with data visualization studio Stamen to create an interface to probe those correlations. The UI lets you drag and drop baseball diamonds, solar panels, buildings, and other things all over town. The point isn’t to design a city, but to learn more about what AI can, and can’t, do.
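To make the "overlaying census data on satellite imagery" step concrete, here is a minimal sketch of how image tiles might be paired with income labels before training. The article does not describe Tiwari's actual pipeline, so everything here is an assumption for illustration: the `Tract` class, the bounding-box simplification (real census tracts are polygons), the income cutoffs, and the function names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tract:
    """A census tract simplified to a bounding box (hypothetical; real tracts are polygons)."""
    name: str
    min_x: float
    min_y: float
    max_x: float
    max_y: float
    median_income: float

    def contains(self, x: float, y: float) -> bool:
        # Half-open box so a point on a shared edge belongs to exactly one tract.
        return self.min_x <= x < self.max_x and self.min_y <= y < self.max_y


def income_bracket(income: float) -> str:
    # Cutoffs are invented for this example, not taken from the article.
    if income < 40_000:
        return "low"
    if income < 100_000:
        return "middle"
    return "high"


def label_tiles(tile_size: float, cols: int, rows: int, tracts: list) -> dict:
    """Label each (col, row) tile with the bracket of the tract containing its center."""
    labels = {}
    for row in range(rows):
        for col in range(cols):
            center_x = (col + 0.5) * tile_size
            center_y = (row + 0.5) * tile_size
            tract = next((t for t in tracts if t.contains(center_x, center_y)), None)
            if tract is not None:  # tiles outside every tract stay unlabeled
                labels[(col, row)] = income_bracket(tract.median_income)
    return labels


# Two made-up tracts side by side, covered by a 4 x 2 grid of tiles.
tracts = [
    Tract("A", 0, 0, 2, 2, median_income=35_000),
    Tract("B", 2, 0, 4, 2, median_income=120_000),
]
labels = label_tiles(tile_size=1.0, cols=4, rows=2, tracts=tracts)
```

Each labeled tile would then become one training example, an image paired with an income bracket. A neural network trained on such pairs can pick up only the visual correlations present in that city's data, which is consistent with the article's note that each model works only for its own city.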