PENNY Machine Learning Tools with Digital Globe

We trained an AI to predict wealth from space

Penny is an AI built by Stamen Design and researchers at Carnegie Mellon University on top of GBDX, DigitalGlobe’s analytics platform. This simple tool is designed to help us understand what wealth and poverty look like to an artificial intelligence built on machine learning using neural networks. The tool lets you adjust the landscape of a city by adding and removing urban features like buildings, parks, and freeways in high-resolution satellite imagery.

The interface lets you explore how different kinds of features of a city make it look wealthy or poor to an AI—and in the process poke at the black box of machine learning, see what makes it tick, where you think it makes sense to you, and where it might not.

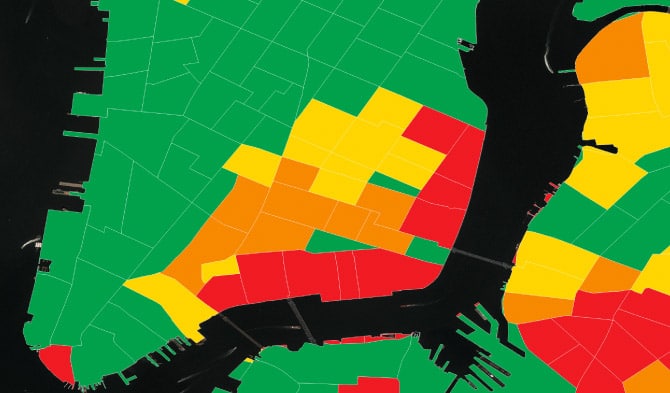

We built Penny using income data from the U.S. Census Bureau, overlaid on DigitalGlobe satellite imagery. Then we gave this information to a neural network, training it to predict the average household incomes in any area in the city using both of these layers of data from the census and the satellite. The AI looked for patterns in the imagery that correlate with census data. Over time, the neural network learned what patterns best predicted high and low income levels. We can then ask the model what it thinks income levels are for a place, just based on looking at a satellite image.

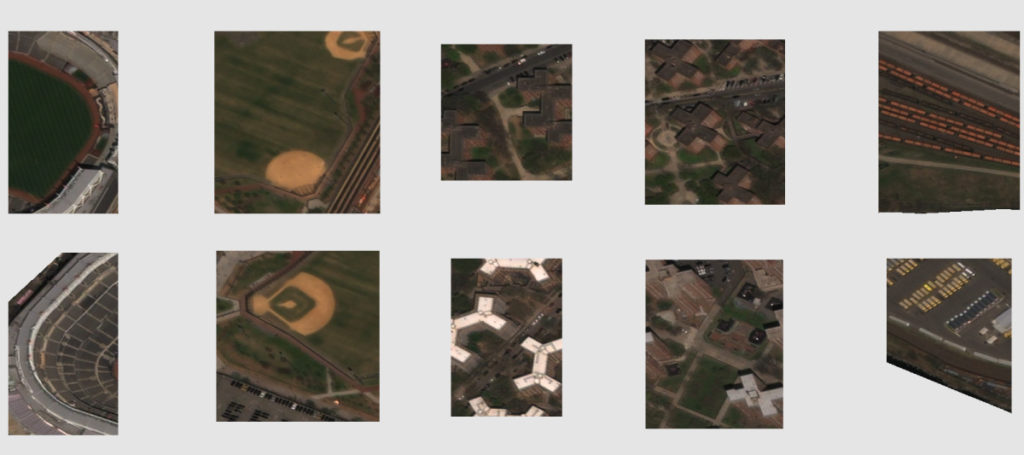

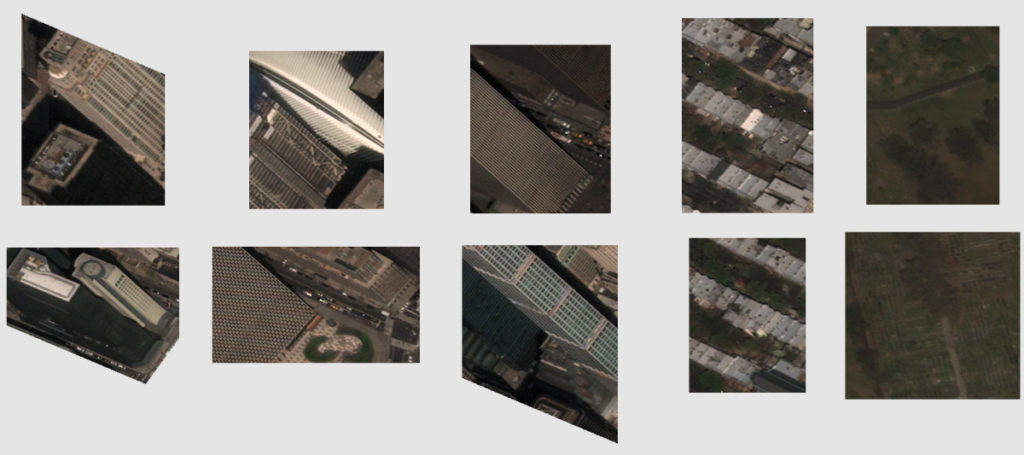

After Penny was let loose to make predictions about household income just based on satellite images, we began taking a close look at areas in New York City that Penny predicted had high or low incomes. It is clear that Penny learned that there are some patterns that are correlated in the imagery and the census data. Different types of objects and shapes seem to be highly correlated with different income levels. For example, lower income areas tend to have baseball diamonds, parking lots, and large similarly shaped buildings (such as housing projects). In middle income areas we see more single family homes and apartment buildings. Higher income areas tend to have greener spaces, tall shiny buildings, and single family homes with lush backyards.

@stamen is the best team I ever collaborated with. Jordan Winkler, PhD Geospatial Big Data Ecosystem Product Manager, Digital Globe